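A Match of Gold and Jade Is Easy to Arrange, a Pledge of Wood and Stone Is Hard to Find | Made for Each Other: Setting Up a Python 3 Development Environment on M1 macOS (Apple Silicon), with the Deep Learning Frameworks TensorFlow and PyTorch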

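I have been in the M1's embrace for a while now. As the saying goes, everyone hears the new love laughing and nobody sees the old love weeping: my Intel Mac has long been shelved, and "surprisingly good" no longer does the M1 Mac justice. It is more a case of "fragrance filling a hall of gold and jade, a fan half-hiding a face like peach blossom". With the silky Magic Keyboard under your fingers, never mind 996, even a 007 schedule feels bearable. So much for today's round of M1 praise. A sword, however sharp, will not cut unless it is honed; talent, however fine, will not rise unless it is schooled. This time we set up a Python 3 development environment on M1 macOS.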

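Normally you could just download the latest arm64 build of Python 3.9 from the official site (python.org). Because some libraries still depend on older Python versions, though, we will use conda, which can manage several Python versions side by side. The three main conda distributions are Anaconda, Miniconda, and conda-forge's Miniforge. All three have announced support for the M1 chip, but at the time of writing Miniforge was the first to ship a stable release. Open the download page, https://github.com/conda-forge/miniforge/#download, and choose the macOS arm64 (Apple Silicon) build: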

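The downloaded file is not an installer package but a shell script. Once the download finishes, change to the download directory in a terminal:

cd ~/Downloads

Run the script to install:

sudo bash ./Miniforge3-MacOSX-arm64.sh

(Installing with sudo leaves the installation owned by root, which is why conda info later reports the base environment as read only and why the conda create commands below are also run with sudo.) You will then be asked to accept the license terms: press Enter, then type yes: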

Welcome to Miniforge3 4.9.2-7

In order to continue the installation process, please review the license

agreement.

Please, press ENTER to continue

>>>

BSD 3-clause license

Copyright (c) 2019-2020, conda-forge

All rights reserved.

Redistribution and use in source and binary forms, with or without

modification, are permitted provided that the following conditions are met:

1. Redistributions of source code must retain the above copyright notice, this

list of conditions and the following disclaimer.

2. Redistributions in binary form must reproduce the above copyright notice,

this list of conditions and the following disclaimer in the documentation

and/or other materials provided with the distribution.

3. Neither the name of the copyright holder nor the names of its contributors

may be used to endorse or promote products derived from this software without

specific prior written permission.

THIS SOFTWARE IS PROVIDED BY THE COPYRIGHT HOLDERS AND CONTRIBUTORS "AS IS" AND

ANY EXPRESS OR IMPLIED WARRANTIES, INCLUDING, BUT NOT LIMITED TO, THE IMPLIED

WARRANTIES OF MERCHANTABILITY AND FITNESS FOR A PARTICULAR PURPOSE ARE

DISCLAIMED. IN NO EVENT SHALL THE COPYRIGHT HOLDER OR CONTRIBUTORS BE LIABLE

FOR ANY DIRECT, INDIRECT, INCIDENTAL, SPECIAL, EXEMPLARY, OR CONSEQUENTIAL

DAMAGES (INCLUDING, BUT NOT LIMITED TO, PROCUREMENT OF SUBSTITUTE GOODS OR

SERVICES; LOSS OF USE, DATA, OR PROFITS; OR BUSINESS INTERRUPTION) HOWEVER

CAUSED AND ON ANY THEORY OF LIABILITY, WHETHER IN CONTRACT, STRICT LIABILITY,

OR TORT (INCLUDING NEGLIGENCE OR OTHERWISE) ARISING IN ANY WAY OUT OF THE USE

OF THIS SOFTWARE, EVEN IF ADVISED OF THE POSSIBILITY OF SUCH DAMAGE.

Do you accept the license terms? [yes|no]

[no] >>> yes

The default Python version is still 3.9, and the installer brings 35 base packages with it, so there is no need to install them by hand:

brotlipy 0.7.0 py39h46acfd9_1001 installed

bzip2 1.0.8 h27ca646_4 installed

ca-certificates 2020.12.5 h4653dfc_0 installed

certifi 2020.12.5 py39h2804cbe_1 installed

cffi 1.14.5 py39h702c04f_0 installed

chardet 4.0.0 py39h2804cbe_1 installed

conda 4.9.2 py39h2804cbe_0 installed

conda-package-handling 1.7.2 py39h51e6412_0 installed

cryptography 3.4.4 py39h6e07874_0 installed

idna 2.10 pyh9f0ad1d_0 installed

libcxx 11.0.1 h168391b_0 installed

libffi 3.3 h9f76cd9_2 installed

ncurses 6.2 h9aa5885_4 installed

openssl 1.1.1j h27ca646_0 installed

pip 21.0.1 pyhd8ed1ab_0 installed

pycosat 0.6.3 py39h46acfd9_1006 installed

pycparser 2.20 pyh9f0ad1d_2 installed

pyopenssl 20.0.1 pyhd8ed1ab_0 installed

pysocks 1.7.1 py39h2804cbe_3 installed

python 3.9.2 hcbd9b3a_0_cpython installed

python_abi 3.9 1_cp39 installed

readline 8.0 hc8eb9b7_2 installed

requests 2.25.1 pyhd3deb0d_0 installed

ruamel_yaml 0.15.80 py39h46acfd9_1004 installed

setuptools 49.6.0 py39h2804cbe_3 installed

six 1.15.0 pyh9f0ad1d_0 installed

sqlite 3.34.0 h6d56c25_0 installed

tk 8.6.10 hf7e6567_1 installed

tqdm 4.57.0 pyhd8ed1ab_0 installed

tzdata 2021a he74cb21_0 installed

urllib3 1.26.3 pyhd8ed1ab_0 installed

wheel 0.36.2 pyhd3deb0d_0 installed

xz 5.2.5 h642e427_1 installed

yaml 0.2.5 h642e427_0 installed

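zlib 1.2.11 h31e879b_1009 installed

Next, edit the shell configuration file with vim ~/.zshrc and add the following lines (liuyue is my user name; replace it with the user name of your own Mac):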

path=('/Users/liuyue/miniforge3/bin' $path)

export PATH

Save the file, then reload it:

source ~/.zshrc

With the environment variable in place, type python3:

➜ ~ python3

Python 3.9.2 | packaged by conda-forge | (default, Feb 21 2021, 05:00:30)

[Clang 11.0.1 ] on darwin

Type "help", "copyright", "credits" or "license" for more information.

>>>

The banner shows that python3 now resolves to the interpreter installed by conda.
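The same check can be made from inside Python. The snippet below is only a small sketch, assuming a CPython interpreter; the helper names `describe_interpreter` and `looks_native_arm64` are made up for this illustration. `platform.machine()` reports `arm64` for a native Apple Silicon build and `x86_64` when the interpreter runs under Rosetta 2, and `shutil.which` shows which `python3` comes first on PATH.

```python
import platform
import shutil
import sys


def describe_interpreter():
    """Collect a few facts about the Python that is currently running."""
    return {
        "executable": sys.executable,                 # path of this interpreter
        "version": platform.python_version(),         # e.g. "3.9.2"
        "machine": platform.machine(),                # "arm64" on native Apple Silicon
        "python3_on_path": shutil.which("python3"),   # first python3 found on PATH, or None
    }


def looks_native_arm64(info):
    """True when the interpreter reports an arm64 build (hypothetical helper)."""
    return info["machine"] == "arm64"


info = describe_interpreter()
for key, value in info.items():
    print(f"{key}: {value}")
print("native arm64:", looks_native_arm64(info))
```

If `python3_on_path` does not point into the miniforge3 directory, the PATH entry added to ~/.zshrc has probably not been picked up by the current shell yet.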

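Here is a brief introduction to the conda command.

conda info shows basic information about the current conda installation: versions, platform, channel URLs, and directory locations: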

➜ ~ conda info

active environment : None

user config file : /Users/liuyue/.condarc

populated config files : /Users/liuyue/miniforge3/.condarc

conda version : 4.9.2

conda-build version : not installed

python version : 3.9.2.final.0

virtual packages : __osx=11.2.2=0

__unix=0=0

__archspec=1=arm64

base environment : /Users/liuyue/miniforge3 (read only)

channel URLs : https://conda.anaconda.org/conda-forge/osx-arm64

https://conda.anaconda.org/conda-forge/noarch

package cache : /Users/liuyue/miniforge3/pkgs

/Users/liuyue/.conda/pkgs

envs directories : /Users/liuyue/.conda/envs

/Users/liuyue/miniforge3/envs

platform : osx-arm64

user-agent : conda/4.9.2 requests/2.25.1 CPython/3.9.2 Darwin/20.3.0 OSX/11.2.2

UID:GID : 502:20

netrc file : None

offline mode : False

For well-known reasons, downloads from the default servers are slow in mainland China, so we configure domestic mirrors:

conda config --add channels https://mirrors.ustc.edu.cn/anaconda/pkgs/main/

conda config --add channels https://mirrors.ustc.edu.cn/anaconda/pkgs/free/

conda config --add channels https://mirrors.ustc.edu.cn/anaconda/cloud/conda-forge/

conda config --add channels https://mirrors.ustc.edu.cn/anaconda/cloud/msys2/

conda config --add channels https://mirrors.ustc.edu.cn/anaconda/cloud/bioconda/

conda config --add channels https://mirrors.ustc.edu.cn/anaconda/cloud/menpo/

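conda config --set show_channel_urls yes

Then check the current configuration:

conda config --show

The mirrors now appear in the channel list (conda config --add prepends, so the most recently added mirror is listed first):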

channel_priority: flexible

channels:

- https://mirrors.ustc.edu.cn/anaconda/cloud/menpo/

- https://mirrors.ustc.edu.cn/anaconda/cloud/bioconda/

- https://mirrors.ustc.edu.cn/anaconda/cloud/msys2/

- https://mirrors.ustc.edu.cn/anaconda/cloud/conda-forge/

- https://mirrors.ustc.edu.cn/anaconda/pkgs/free/

- https://mirrors.ustc.edu.cn/anaconda/pkgs/main/

- defaults

- conda-forge

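client_ssl_cert: None

Some other commonly used conda commands:

1. conda --version # show the conda version; also confirms that conda is installed

2. conda update conda # update conda to the latest version, along with related packages

3. conda update --all # update all packages

4. conda update package_name # update a specific package

5. conda create -n env_name package_name # create a new environment named env_name and install package_name into it; versions can be pinned, for example: conda create -n python2 python=2.7 numpy pandas creates an environment named python2 with Python 2.7 and also installs numpy and pandas

6. conda activate env_name # switch to the env_name environment (older conda releases used source activate env_name)

7. conda deactivate # leave the current environment (older conda releases used source deactivate)

8. conda info -e # list all environments that have been created

9. conda create --name new_env_name --clone old_env_name # copy old_env_name to new_env_name

10. conda remove --name env_name --all # delete an environment

11. conda list # list all installed packages

12. conda install package_name # install a package into the current environment

13. conda install --name env_name package_name # install a package into a specific environment

14. conda remove --name env_name package # remove a package from a specific environment

15. conda remove package # remove a package from the current environment

16. conda create -n tensorflow_env tensorflow

conda activate tensorflow_env # install the CPU build of tensorflow with conda

17. conda create -n tensorflow_gpuenv tensorflow-gpu

conda activate tensorflow_gpuenv # install the GPU build of tensorflow with conda

18. conda env remove -n env_name # use this when deleting an environment with item 10 fails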
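These commands can also be driven from a script. The sketch below is an illustration rather than a definitive tool: it relies on `conda info --json`, and it assumes the JSON contains the keys `platform`, `root_prefix`, and `envs`. The function names `load_conda_info` and `summarize` are made up here, and the sample dictionary only imitates the paths used in this article; it is not a live query.

```python
import json
import os
import subprocess


def load_conda_info(conda="conda"):
    """Run `conda info --json` and return the parsed dictionary."""
    result = subprocess.run(
        [conda, "info", "--json"], capture_output=True, text=True, check=True
    )
    return json.loads(result.stdout)


def summarize(info):
    """Map each environment prefix to a short name, similar to `conda info -e`."""
    root = info.get("root_prefix", "")
    envs = {}
    for prefix in info.get("envs", []):
        # The root prefix is the base environment; others are named after their folder.
        name = "base" if prefix == root else os.path.basename(prefix)
        envs[name] = prefix
    return {"platform": info.get("platform"), "envs": envs}


# Sample data shaped like the assumed JSON (paths follow this article's examples).
sample = {
    "platform": "osx-arm64",
    "root_prefix": "/Users/liuyue/miniforge3",
    "envs": ["/Users/liuyue/miniforge3", "/Users/liuyue/miniforge3/envs/py38"],
}
print(summarize(sample))
```

On a machine where conda is installed, `summarize(load_conda_info())` would produce the same kind of summary from the real configuration.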

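Next, let's integrate the deep learning framework TensorFlow. The default Python is 3.9, so we use conda to create a Python 3.8 virtual environment (the wheels installed below are built for cp38):

sudo conda create -n py38 python=3.8

Once that succeeds, run:

conda info -e

to list all environments conda currently manages: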

➜ ~ conda info -e

# conda environments:

#

base * /Users/liuyue/miniforge3

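py38 /Users/liuyue/miniforge3/envs/py38

There is the default 3.9 environment and the newly created 3.8 one; the asterisk marks the environment that is currently active. Switch to 3.8:

conda activate py38

The active environment is now py38: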

(py38) ➜ ~ conda activate py38

(py38) ➜ ~ conda info -e

# conda environments:

#

base /Users/liuyue/miniforge3

py38 * /Users/liuyue/miniforge3/envs/py38

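(py38) ➜ ~

Now the TensorFlow journey begins. Apple maintains a separate build adapted to the M1 chip, so the usual pip installation does not work here, and the release archive has to be downloaded separately: https://github.91chifun.workers.dev//https://github.com/apple/tensorflow_macos/releases/download/v0.1alpha1/tensorflow_macos-0.1alpha1.tar.gz

Extract the archive:

tar -zxvf tensorflow_macos-0.1alpha1.tar.gz

Then change into the extracted directory (make sure you are inside the arm64 folder):

cd tensorflow_macos/arm64

Install from the downloaded arm64 wheels: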

pip install --force pip==20.2.4 wheel setuptools cached-property six

pip install --upgrade --no-dependencies --force numpy-1.18.5-cp38-cp38-macosx_11_0_arm64.whl grpcio-1.33.2-cp38-cp38-macosx_11_0_arm64.whl h5py-2.10.0-cp38-cp38-macosx_11_0_arm64.whl tensorflow_addons-0.11.2+mlcompute-cp38-cp38-macosx_11_0_arm64.whl

pip install absl-py astunparse flatbuffers gast google_pasta keras_preprocessing opt_einsum protobuf tensorflow_estimator termcolor typing_extensions wrapt wheel tensorboard typeguard

pip install --upgrade --force --no-dependencies tensorflow_macos-0.1a1-cp38-cp38-macosx_11_0_arm64.whl

Once the installation succeeds, test it:

(py38) ➜ arm64 python

Python 3.8.8 | packaged by conda-forge | (default, Feb 20 2021, 15:50:57)

[Clang 11.0.1 ] on darwin

Type "help", "copyright", "credits" or "license" for more information.

>>> import tensorflow

>>>

The import succeeds without any errors.
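The wheel file names used above encode which interpreter and platform each file targets, which is why a 3.8 environment was needed. As a rough illustration, the sketch below splits a wheel name into the fields defined by PEP 427 and compares the Python tag with the running interpreter. The helper names `parse_wheel_name` and `matches_running_python` are hypothetical, and the comparison assumes CPython and ignores the platform tag.

```python
import sys


def parse_wheel_name(filename):
    """Split a wheel file name into its PEP 427 components."""
    if not filename.endswith(".whl"):
        raise ValueError(f"not a wheel file: {filename}")
    parts = filename[: -len(".whl")].split("-")
    # name-version[-build]-python-abi-platform gives 5 or 6 fields
    if len(parts) not in (5, 6):
        raise ValueError(f"unexpected wheel name: {filename}")
    return {
        "distribution": parts[0],
        "version": parts[1],
        "python_tag": parts[-3],
        "abi_tag": parts[-2],
        "platform_tag": parts[-1],
    }


def matches_running_python(wheel):
    """True when the wheel's CPython tag equals the running interpreter's tag."""
    current = f"cp{sys.version_info.major}{sys.version_info.minor}"
    return wheel["python_tag"] == current


for name in (
    "tensorflow_macos-0.1a1-cp38-cp38-macosx_11_0_arm64.whl",
    "numpy-1.18.5-cp38-cp38-macosx_11_0_arm64.whl",
):
    wheel = parse_wheel_name(name)
    print(wheel["distribution"], wheel["python_tag"], wheel["platform_tag"],
          matches_running_python(wheel))
```

Run inside the py38 environment, the last column should read True for both files; in the default 3.9 environment it would read False, and pip would refuse the wheels.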

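Now let's see how efficiently the M1 trains a model with TensorFlow. Remember the glamorous "Ex Machina" from the earlier post, "AI Is Nothing Special: Build Your Own Chatbot (ChatRobot) with the Python 3 Deep Learning Libraries Keras/TensorFlow"?

Create test_mychat.py: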

import random

import nltk
import numpy as np
import pandas as pd
from nltk.stem.lancaster import LancasterStemmer
from tensorflow.keras.layers import Dense, Dropout
from tensorflow.keras.models import Sequential
from tensorflow.keras.optimizers import SGD

# nltk.word_tokenize needs the "punkt" tokenizer data; run nltk.download('punkt') once beforehand
stemmer = LancasterStemmer()

words = []
classes = []
documents = []
ignore_words = ['?']

# training corpus: two intents, each with sample patterns and canned responses
intents = {"intents": [
    {"tag": "打招呼",
     "patterns": ["你好", "您好", "请问", "有人吗", "师傅", "不好意思", "美女", "帅哥", "靓妹"],
     "responses": ["您好", "又是您啊", "吃了么您内", "您有事吗"],
     "context": [""]
     },
    {"tag": "告别",
     "patterns": ["再见", "拜拜", "88", "回见", "回头见"],
     "responses": ["再见", "一路顺风", "下次见", "拜拜了您内"],
     "context": [""]
     },
]
}

# loop through each sentence in our intents patterns
for intent in intents['intents']:
    for pattern in intent['patterns']:
        # tokenize each word in the sentence
        w = nltk.word_tokenize(pattern)
        # add to our words list
        words.extend(w)
        # add to documents in our corpus
        documents.append((w, intent['tag']))
        # add to our classes list
        if intent['tag'] not in classes:
            classes.append(intent['tag'])

# stem and lower each word and remove duplicates
words = [stemmer.stem(w.lower()) for w in words if w not in ignore_words]
words = sorted(list(set(words)))

# sort classes
classes = sorted(list(set(classes)))

# documents = combination between patterns and intents
# print (len(documents), "documents")
# classes = intents
# print (len(classes), "contexts", classes)
# words = all words, vocabulary
# print (len(words), "word count", words)

# create our training data
training = []
# create an empty array for our output
output_empty = [0] * len(classes)

# training set, bag of words for each sentence
for doc in documents:
    # initialize our bag of words
    bag = []
    pattern_words = doc[0]
    pattern_words = [stemmer.stem(word.lower()) for word in pattern_words]
    for w in words:
        bag.append(1 if w in pattern_words else 0)
    # one-hot row marking the class of this document
    output_row = list(output_empty)
    output_row[classes.index(doc[1])] = 1
    training.append([bag, output_row])

random.shuffle(training)
# split features and labels without building a ragged NumPy array
train_x = [row[0] for row in training]
train_y = [row[1] for row in training]

# small feed-forward classifier: two hidden layers with dropout, softmax output
model = Sequential()
model.add(Dense(128, input_shape=(len(train_x[0]),), activation='relu'))
model.add(Dropout(0.5))
model.add(Dense(64, activation='relu'))
model.add(Dropout(0.5))
model.add(Dense(len(train_y[0]), activation='softmax'))

sgd = SGD(lr=0.01, decay=1e-6, momentum=0.9, nesterov=True)
model.compile(loss='categorical_crossentropy', optimizer=sgd, metrics=['accuracy'])
model.fit(np.array(train_x), np.array(train_y), epochs=200, batch_size=5, verbose=1)


def clean_up_sentence(sentence):
    # tokenize the pattern - split words into array
    sentence_words = nltk.word_tokenize(sentence)
    # stem each word - create short form for word
    sentence_words = [stemmer.stem(word.lower()) for word in sentence_words]
    return sentence_words


# return bag of words array: 0 or 1 for each word in the bag that exists in the sentence
def bow(sentence, words, show_details=True):
    # tokenize the pattern
    sentence_words = clean_up_sentence(sentence)
    # bag of words - matrix of N words, vocabulary matrix
    bag = [0] * len(words)
    for s in sentence_words:
        for i, w in enumerate(words):
            if w == s:
                # assign 1 if current word is in the vocabulary position
                bag[i] = 1
                if show_details:
                    print("found in bag: %s" % w)
    return np.array(bag)


def classify_local(sentence):
    ERROR_THRESHOLD = 0.25
    # generate probabilities from the model
    input_data = pd.DataFrame([bow(sentence, words)], dtype=float, index=['input'])
    results = model.predict([input_data])[0]
    # filter out predictions below a threshold, and provide intent index
    results = [[i, r] for i, r in enumerate(results) if r > ERROR_THRESHOLD]
    # sort by strength of probability
    results.sort(key=lambda x: x[1], reverse=True)
    return_list = []
    for r in results:
        return_list.append((classes[r[0]], str(r[1])))
    # return tuple of intent and probability
    return return_list


p = bow("你好", words)
print(p)
print(classify_local('请问'))

Output:

(py38) ➜ mytornado git:(master) ✗ python3 test_mychat.py

2021-03-03 22:43:21.059383: I tensorflow/compiler/mlir/mlir_graph_optimization_pass.cc:116] None of the MLIR optimization passes are enabled (registered 2)

2021-03-03 22:43:21.059529: W tensorflow/core/platform/profile_utils/cpu_utils.cc:126] Failed to get CPU frequency: 0 Hz

Epoch 1/200

3/3 [==============================] - 0s 570us/step - loss: 0.6966 - accuracy: 0.5750

Epoch 2/200

3/3 [==============================] - 0s 482us/step - loss: 0.6913 - accuracy: 0.4857

Epoch 3/200

3/3 [==============================] - 0s 454us/step - loss: 0.6795 - accuracy: 0.4750

Epoch 4/200

3/3 [==============================] - 0s 434us/step - loss: 0.6913 - accuracy: 0.4750

Epoch 5/200

3/3 [==============================] - 0s 417us/step - loss: 0.6563 - accuracy: 0.5107

Epoch 6/200

3/3 [==============================] - 0s 454us/step - loss: 0.6775 - accuracy: 0.5714

Epoch 7/200

3/3 [==============================] - 0s 418us/step - loss: 0.6582 - accuracy: 0.6964

Epoch 8/200

3/3 [==============================] - 0s 487us/step - loss: 0.6418 - accuracy: 0.8071

Epoch 9/200

3/3 [==============================] - 0s 504us/step - loss: 0.6055 - accuracy: 0.6964

Epoch 10/200

3/3 [==============================] - 0s 457us/step - loss: 0.5933 - accuracy: 0.6964

Epoch 11/200

3/3 [==============================] - 0s 392us/step - loss: 0.6679 - accuracy: 0.5714

Epoch 12/200

3/3 [==============================] - 0s 427us/step - loss: 0.6060 - accuracy: 0.7464

Epoch 13/200

3/3 [==============================] - 0s 425us/step - loss: 0.6677 - accuracy: 0.5964

Epoch 14/200

3/3 [==============================] - 0s 420us/step - loss: 0.6208 - accuracy: 0.6214

Epoch 15/200

3/3 [==============================] - 0s 401us/step - loss: 0.6315 - accuracy: 0.6714

Epoch 16/200

3/3 [==============================] - 0s 401us/step - loss: 0.6718 - accuracy: 0.6464

Epoch 17/200

3/3 [==============================] - 0s 386us/step - loss: 0.6407 - accuracy: 0.6714

Epoch 18/200

3/3 [==============================] - 0s 505us/step - loss: 0.6031 - accuracy: 0.6464

Epoch 19/200

3/3 [==============================] - 0s 407us/step - loss: 0.6245 - accuracy: 0.6214

Epoch 20/200

3/3 [==============================] - 0s 422us/step - loss: 0.5805 - accuracy: 0.6964

Epoch 21/200

3/3 [==============================] - 0s 379us/step - loss: 0.6923 - accuracy: 0.5464

Epoch 22/200

3/3 [==============================] - 0s 396us/step - loss: 0.6383 - accuracy: 0.5714

Epoch 23/200

3/3 [==============================] - 0s 427us/step - loss: 0.6628 - accuracy: 0.5714

Epoch 24/200

3/3 [==============================] - 0s 579us/step - loss: 0.6361 - accuracy: 0.5964

Epoch 25/200

3/3 [==============================] - 0s 378us/step - loss: 0.5632 - accuracy: 0.7214

Epoch 26/200

3/3 [==============================] - 0s 387us/step - loss: 0.6851 - accuracy: 0.5214

Epoch 27/200

3/3 [==============================] - 0s 393us/step - loss: 0.6012 - accuracy: 0.6214

Epoch 28/200

3/3 [==============================] - 0s 392us/step - loss: 0.6470 - accuracy: 0.5964

Epoch 29/200

3/3 [==============================] - 0s 348us/step - loss: 0.6346 - accuracy: 0.6214

Epoch 30/200

3/3 [==============================] - 0s 362us/step - loss: 0.6350 - accuracy: 0.4964

Epoch 31/200

3/3 [==============================] - 0s 369us/step - loss: 0.5842 - accuracy: 0.5964

Epoch 32/200

3/3 [==============================] - 0s 481us/step - loss: 0.5279 - accuracy: 0.7214

Epoch 33/200

3/3 [==============================] - 0s 439us/step - loss: 0.5956 - accuracy: 0.7321

Epoch 34/200

3/3 [==============================] - 0s 355us/step - loss: 0.5570 - accuracy: 0.6964

Epoch 35/200

3/3 [==============================] - 0s 385us/step - loss: 0.5546 - accuracy: 0.8071

Epoch 36/200

3/3 [==============================] - 0s 375us/step - loss: 0.5616 - accuracy: 0.6714

Epoch 37/200

3/3 [==============================] - 0s 379us/step - loss: 0.6955 - accuracy: 0.6464

Epoch 38/200

3/3 [==============================] - 0s 389us/step - loss: 0.6089 - accuracy: 0.7321

Epoch 39/200

3/3 [==============================] - 0s 375us/step - loss: 0.5377 - accuracy: 0.6714

Epoch 40/200

3/3 [==============================] - 0s 392us/step - loss: 0.6224 - accuracy: 0.7179

Epoch 41/200

3/3 [==============================] - 0s 379us/step - loss: 0.6234 - accuracy: 0.5464

Epoch 42/200

3/3 [==============================] - 0s 411us/step - loss: 0.5224 - accuracy: 0.8321

Epoch 43/200

3/3 [==============================] - 0s 386us/step - loss: 0.5848 - accuracy: 0.5964

Epoch 44/200

3/3 [==============================] - 0s 401us/step - loss: 0.4620 - accuracy: 0.8679

Epoch 45/200

3/3 [==============================] - 0s 365us/step - loss: 0.4664 - accuracy: 0.8071

Epoch 46/200

3/3 [==============================] - 0s 367us/step - loss: 0.5904 - accuracy: 0.7679

Epoch 47/200

3/3 [==============================] - 0s 359us/step - loss: 0.5111 - accuracy: 0.7929

Epoch 48/200

3/3 [==============================] - 0s 363us/step - loss: 0.4712 - accuracy: 0.8679

Epoch 49/200

3/3 [==============================] - 0s 401us/step - loss: 0.5601 - accuracy: 0.8071

Epoch 50/200

3/3 [==============================] - 0s 429us/step - loss: 0.4884 - accuracy: 0.7929

Epoch 51/200

3/3 [==============================] - 0s 377us/step - loss: 0.5137 - accuracy: 0.8286

Epoch 52/200

3/3 [==============================] - 0s 368us/step - loss: 0.5475 - accuracy: 0.8286

Epoch 53/200

3/3 [==============================] - 0s 592us/step - loss: 0.4077 - accuracy: 0.8536

Epoch 54/200

3/3 [==============================] - 0s 400us/step - loss: 0.5367 - accuracy: 0.8179

Epoch 55/200

3/3 [==============================] - 0s 399us/step - loss: 0.5288 - accuracy: 0.8429

Epoch 56/200

3/3 [==============================] - 0s 367us/step - loss: 0.5775 - accuracy: 0.6964

Epoch 57/200

3/3 [==============================] - 0s 372us/step - loss: 0.5680 - accuracy: 0.6821

Epoch 58/200

3/3 [==============================] - 0s 360us/step - loss: 0.5164 - accuracy: 0.7321

Epoch 59/200

3/3 [==============================] - 0s 364us/step - loss: 0.5334 - accuracy: 0.6571

Epoch 60/200

3/3 [==============================] - 0s 358us/step - loss: 0.3858 - accuracy: 0.9036

Epoch 61/200

3/3 [==============================] - 0s 356us/step - loss: 0.4313 - accuracy: 0.8679

Epoch 62/200

3/3 [==============================] - 0s 373us/step - loss: 0.5017 - accuracy: 0.8429

Epoch 63/200

3/3 [==============================] - 0s 346us/step - loss: 0.4649 - accuracy: 0.8429

Epoch 64/200

3/3 [==============================] - 0s 397us/step - loss: 0.3804 - accuracy: 0.8893

Epoch 65/200

3/3 [==============================] - 0s 361us/step - loss: 0.5030 - accuracy: 0.7929

Epoch 66/200

3/3 [==============================] - 0s 372us/step - loss: 0.3958 - accuracy: 0.9286

Epoch 67/200

3/3 [==============================] - 0s 345us/step - loss: 0.4240 - accuracy: 0.8536

Epoch 68/200

3/3 [==============================] - 0s 360us/step - loss: 0.4651 - accuracy: 0.7929

Epoch 69/200

3/3 [==============================] - 0s 376us/step - loss: 0.4687 - accuracy: 0.7571

Epoch 70/200

3/3 [==============================] - 0s 398us/step - loss: 0.4660 - accuracy: 0.8429

Epoch 71/200

3/3 [==============================] - 0s 368us/step - loss: 0.3960 - accuracy: 0.9393

Epoch 72/200

3/3 [==============================] - 0s 355us/step - loss: 0.5523 - accuracy: 0.6071

Epoch 73/200

3/3 [==============================] - 0s 361us/step - loss: 0.5266 - accuracy: 0.7821

Epoch 74/200

3/3 [==============================] - 0s 371us/step - loss: 0.4245 - accuracy: 0.9643

Epoch 75/200

3/3 [==============================] - 0s 367us/step - loss: 0.5024 - accuracy: 0.7786

Epoch 76/200

3/3 [==============================] - 0s 453us/step - loss: 0.3419 - accuracy: 0.9393

Epoch 77/200

3/3 [==============================] - 0s 405us/step - loss: 0.4930 - accuracy: 0.7429

Epoch 78/200

3/3 [==============================] - 0s 672us/step - loss: 0.3443 - accuracy: 0.9036

Epoch 79/200

3/3 [==============================] - 0s 386us/step - loss: 0.3864 - accuracy: 0.8893

Epoch 80/200

3/3 [==============================] - 0s 386us/step - loss: 0.3863 - accuracy: 0.9286

Epoch 81/200

3/3 [==============================] - 0s 391us/step - loss: 0.2771 - accuracy: 0.8679

Epoch 82/200

3/3 [==============================] - 0s 370us/step - loss: 0.6083 - accuracy: 0.5571

Epoch 83/200

3/3 [==============================] - 0s 387us/step - loss: 0.2801 - accuracy: 0.9393

Epoch 84/200

3/3 [==============================] - 0s 357us/step - loss: 0.2483 - accuracy: 0.9286

Epoch 85/200

3/3 [==============================] - 0s 355us/step - loss: 0.2511 - accuracy: 0.9643

Epoch 86/200

3/3 [==============================] - 0s 339us/step - loss: 0.3410 - accuracy: 0.8893

Epoch 87/200

3/3 [==============================] - 0s 361us/step - loss: 0.3432 - accuracy: 0.9036

Epoch 88/200

3/3 [==============================] - 0s 347us/step - loss: 0.3819 - accuracy: 0.8893

Epoch 89/200

3/3 [==============================] - 0s 361us/step - loss: 0.5142 - accuracy: 0.7179

Epoch 90/200

3/3 [==============================] - 0s 502us/step - loss: 0.3055 - accuracy: 0.9393

Epoch 91/200

3/3 [==============================] - 0s 377us/step - loss: 0.3144 - accuracy: 0.8536

Epoch 92/200

3/3 [==============================] - 0s 376us/step - loss: 0.3712 - accuracy: 0.9036

Epoch 93/200

3/3 [==============================] - 0s 389us/step - loss: 0.1974 - accuracy: 0.9393

Epoch 94/200

3/3 [==============================] - 0s 365us/step - loss: 0.3128 - accuracy: 0.9393

Epoch 95/200

3/3 [==============================] - 0s 376us/step - loss: 0.2194 - accuracy: 1.0000

Epoch 96/200

3/3 [==============================] - 0s 377us/step - loss: 0.1994 - accuracy: 1.0000

Epoch 97/200

3/3 [==============================] - 0s 360us/step - loss: 0.1734 - accuracy: 0.9643

Epoch 98/200

3/3 [==============================] - 0s 367us/step - loss: 0.1786 - accuracy: 1.0000

Epoch 99/200

3/3 [==============================] - 0s 358us/step - loss: 0.4158 - accuracy: 0.8286

Epoch 100/200

3/3 [==============================] - 0s 354us/step - loss: 0.3131 - accuracy: 0.7571

Epoch 101/200

3/3 [==============================] - 0s 350us/step - loss: 0.1953 - accuracy: 0.8893

Epoch 102/200

3/3 [==============================] - 0s 403us/step - loss: 0.2577 - accuracy: 0.8429

Epoch 103/200

3/3 [==============================] - 0s 417us/step - loss: 0.2648 - accuracy: 0.8893

Epoch 104/200

3/3 [==============================] - 0s 377us/step - loss: 0.2901 - accuracy: 0.8286

Epoch 105/200

3/3 [==============================] - 0s 383us/step - loss: 0.2822 - accuracy: 0.9393

Epoch 106/200

3/3 [==============================] - 0s 381us/step - loss: 0.2837 - accuracy: 0.9036

Epoch 107/200

3/3 [==============================] - 0s 382us/step - loss: 0.3064 - accuracy: 0.8536

Epoch 108/200

3/3 [==============================] - 0s 352us/step - loss: 0.3376 - accuracy: 0.9036

Epoch 109/200

3/3 [==============================] - 0s 376us/step - loss: 0.3412 - accuracy: 0.8536

Epoch 110/200

3/3 [==============================] - 0s 363us/step - loss: 0.1718 - accuracy: 1.0000

Epoch 111/200

3/3 [==============================] - 0s 347us/step - loss: 0.1899 - accuracy: 0.8786

Epoch 112/200

3/3 [==============================] - 0s 363us/step - loss: 0.2352 - accuracy: 0.8286

Epoch 113/200

3/3 [==============================] - 0s 373us/step - loss: 0.1378 - accuracy: 1.0000

Epoch 114/200

3/3 [==============================] - 0s 353us/step - loss: 0.4288 - accuracy: 0.7071

Epoch 115/200

3/3 [==============================] - 0s 456us/step - loss: 0.4202 - accuracy: 0.6821

Epoch 116/200

3/3 [==============================] - 0s 382us/step - loss: 0.2962 - accuracy: 0.8893

Epoch 117/200

3/3 [==============================] - 0s 394us/step - loss: 0.2571 - accuracy: 0.8893

Epoch 118/200

3/3 [==============================] - 0s 365us/step - loss: 0.2697 - accuracy: 1.0000

Epoch 119/200

3/3 [==============================] - 0s 358us/step - loss: 0.3102 - accuracy: 0.9036

Epoch 120/200

3/3 [==============================] - 0s 367us/step - loss: 0.2928 - accuracy: 0.8286

Epoch 121/200

3/3 [==============================] - 0s 374us/step - loss: 0.3157 - accuracy: 0.8286

Epoch 122/200

3/3 [==============================] - 0s 381us/step - loss: 0.3920 - accuracy: 0.7786

Epoch 123/200

3/3 [==============================] - 0s 335us/step - loss: 0.2090 - accuracy: 0.9036

Epoch 124/200

3/3 [==============================] - 0s 368us/step - loss: 0.5079 - accuracy: 0.7786

Epoch 125/200

3/3 [==============================] - 0s 337us/step - loss: 0.1900 - accuracy: 0.9393

Epoch 126/200

3/3 [==============================] - 0s 339us/step - loss: 0.2047 - accuracy: 0.9643

Epoch 127/200

3/3 [==============================] - 0s 479us/step - loss: 0.3705 - accuracy: 0.7679

Epoch 128/200

3/3 [==============================] - 0s 390us/step - loss: 0.1850 - accuracy: 0.9036

Epoch 129/200

3/3 [==============================] - 0s 642us/step - loss: 0.1594 - accuracy: 0.9393

Epoch 130/200

3/3 [==============================] - 0s 373us/step - loss: 0.2010 - accuracy: 0.8893

Epoch 131/200

3/3 [==============================] - 0s 369us/step - loss: 0.0849 - accuracy: 1.0000

Epoch 132/200

3/3 [==============================] - 0s 349us/step - loss: 0.1145 - accuracy: 1.0000

Epoch 133/200

3/3 [==============================] - 0s 360us/step - loss: 0.1796 - accuracy: 1.0000

Epoch 134/200

3/3 [==============================] - 0s 371us/step - loss: 0.2363 - accuracy: 0.8536

Epoch 135/200

3/3 [==============================] - 0s 386us/step - loss: 0.1922 - accuracy: 0.9393

Epoch 136/200

3/3 [==============================] - 0s 369us/step - loss: 0.3595 - accuracy: 0.7679

Epoch 137/200

3/3 [==============================] - 0s 369us/step - loss: 0.1506 - accuracy: 0.8893

Epoch 138/200

3/3 [==============================] - 0s 377us/step - loss: 0.2471 - accuracy: 0.8536

Epoch 139/200

3/3 [==============================] - 0s 417us/step - loss: 0.1768 - accuracy: 0.8536

Epoch 140/200

3/3 [==============================] - 0s 400us/step - loss: 0.2112 - accuracy: 0.9393

Epoch 141/200

3/3 [==============================] - 0s 377us/step - loss: 0.3652 - accuracy: 0.7179

Epoch 142/200

3/3 [==============================] - 0s 364us/step - loss: 0.3007 - accuracy: 0.8429

Epoch 143/200

3/3 [==============================] - 0s 361us/step - loss: 0.0518 - accuracy: 1.0000

Epoch 144/200

3/3 [==============================] - 0s 373us/step - loss: 0.2144 - accuracy: 0.8286

Epoch 145/200

3/3 [==============================] - 0s 353us/step - loss: 0.0888 - accuracy: 1.0000

Epoch 146/200

3/3 [==============================] - 0s 361us/step - loss: 0.1267 - accuracy: 1.0000

Epoch 147/200

3/3 [==============================] - 0s 341us/step - loss: 0.0321 - accuracy: 1.0000

Epoch 148/200

3/3 [==============================] - 0s 358us/step - loss: 0.0860 - accuracy: 1.0000

Epoch 149/200

3/3 [==============================] - 0s 375us/step - loss: 0.2151 - accuracy: 0.8893

Epoch 150/200

3/3 [==============================] - 0s 351us/step - loss: 0.1592 - accuracy: 1.0000

Epoch 151/200

3/3 [==============================] - 0s 531us/step - loss: 0.1450 - accuracy: 0.8786

Epoch 152/200

3/3 [==============================] - 0s 392us/step - loss: 0.1813 - accuracy: 0.9036

Epoch 153/200

3/3 [==============================] - 0s 404us/step - loss: 0.1197 - accuracy: 1.0000

Epoch 154/200

3/3 [==============================] - 0s 367us/step - loss: 0.0930 - accuracy: 1.0000

Epoch 155/200

3/3 [==============================] - 0s 580us/step - loss: 0.2587 - accuracy: 0.8893

Epoch 156/200

3/3 [==============================] - 0s 383us/step - loss: 0.0742 - accuracy: 1.0000

Epoch 157/200

3/3 [==============================] - 0s 353us/step - loss: 0.1197 - accuracy: 0.9643

Epoch 158/200

3/3 [==============================] - 0s 371us/step - loss: 0.1716 - accuracy: 0.8536

Epoch 159/200

3/3 [==============================] - 0s 337us/step - loss: 0.1300 - accuracy: 0.9643

Epoch 160/200

3/3 [==============================] - 0s 347us/step - loss: 0.1439 - accuracy: 0.9393

Epoch 161/200

3/3 [==============================] - 0s 366us/step - loss: 0.2597 - accuracy: 0.9393

Epoch 162/200

3/3 [==============================] - 0s 345us/step - loss: 0.1605 - accuracy: 0.8893

Epoch 163/200

3/3 [==============================] - 0s 468us/step - loss: 0.0437 - accuracy: 1.0000

Epoch 164/200

3/3 [==============================] - 0s 372us/step - loss: 0.0376 - accuracy: 1.0000

Epoch 165/200

3/3 [==============================] - 0s 391us/step - loss: 0.0474 - accuracy: 1.0000

Epoch 166/200

3/3 [==============================] - 0s 378us/step - loss: 0.3225 - accuracy: 0.7786

Epoch 167/200

3/3 [==============================] - 0s 368us/step - loss: 0.0770 - accuracy: 1.0000

Epoch 168/200

3/3 [==============================] - 0s 367us/step - loss: 0.5629 - accuracy: 0.7786

Epoch 169/200

3/3 [==============================] - 0s 359us/step - loss: 0.0177 - accuracy: 1.0000

Epoch 170/200

3/3 [==============================] - 0s 370us/step - loss: 0.1167 - accuracy: 1.0000

Epoch 171/200

3/3 [==============================] - 0s 349us/step - loss: 0.1313 - accuracy: 1.0000

Epoch 172/200

3/3 [==============================] - 0s 337us/step - loss: 0.0852 - accuracy: 0.9393

Epoch 173/200

3/3 [==============================] - 0s 375us/step - loss: 0.0545 - accuracy: 1.0000

Epoch 174/200

3/3 [==============================] - 0s 354us/step - loss: 0.0674 - accuracy: 0.9643

Epoch 175/200

3/3 [==============================] - 0s 355us/step - loss: 0.0911 - accuracy: 1.0000

Epoch 176/200

3/3 [==============================] - 0s 404us/step - loss: 0.0980 - accuracy: 0.9393

Epoch 177/200

3/3 [==============================] - 0s 396us/step - loss: 0.0465 - accuracy: 1.0000

Epoch 178/200

3/3 [==============================] - 0s 403us/step - loss: 0.1117 - accuracy: 0.9393

Epoch 179/200

3/3 [==============================] - 0s 373us/step - loss: 0.0415 - accuracy: 1.0000

Epoch 180/200

3/3 [==============================] - 0s 369us/step - loss: 0.0825 - accuracy: 1.0000

Epoch 181/200

3/3 [==============================] - 0s 425us/step - loss: 0.0378 - accuracy: 1.0000

Epoch 182/200

3/3 [==============================] - 0s 381us/step - loss: 0.1155 - accuracy: 0.9393

Epoch 183/200

3/3 [==============================] - 0s 354us/step - loss: 0.0207 - accuracy: 1.0000

Epoch 184/200

3/3 [==============================] - 0s 346us/step - loss: 0.0344 - accuracy: 1.0000

Epoch 185/200

3/3 [==============================] - 0s 379us/step - loss: 0.0984 - accuracy: 0.9393

Epoch 186/200

3/3 [==============================] - 0s 360us/step - loss: 0.1508 - accuracy: 0.8536

Epoch 187/200

3/3 [==============================] - 0s 361us/step - loss: 0.0463 - accuracy: 1.0000

Epoch 188/200

3/3 [==============================] - 0s 358us/step - loss: 0.0476 - accuracy: 0.9643

Epoch 189/200

3/3 [==============================] - 0s 379us/step - loss: 0.1592 - accuracy: 1.0000

Epoch 190/200

3/3 [==============================] - 0s 387us/step - loss: 0.0071 - accuracy: 1.0000

Epoch 191/200

3/3 [==============================] - 0s 405us/step - loss: 0.0527 - accuracy: 1.0000

Epoch 192/200

3/3 [==============================] - 0s 401us/step - loss: 0.0874 - accuracy: 0.9393

Epoch 193/200

3/3 [==============================] - 0s 355us/step - loss: 0.0199 - accuracy: 1.0000

Epoch 194/200

3/3 [==============================] - 0s 373us/step - loss: 0.1299 - accuracy: 0.9643

Epoch 195/200

3/3 [==============================] - 0s 360us/step - loss: 0.0929 - accuracy: 1.0000

Epoch 196/200

3/3 [==============================] - 0s 380us/step - loss: 0.0265 - accuracy: 1.0000

Epoch 197/200

3/3 [==============================] - 0s 358us/step - loss: 0.0843 - accuracy: 1.0000

Epoch 198/200

3/3 [==============================] - 0s 354us/step - loss: 0.0925 - accuracy: 1.0000

Epoch 199/200

3/3 [==============================] - 0s 327us/step - loss: 0.0770 - accuracy: 1.0000

Epoch 200/200

3/3 [==============================] - 0s 561us/step - loss: 0.0311 - accuracy: 1.0000

found in bag: 你好

[0 0 1 0 0 0 0 0 0 0 0 0 0 0]

found in bag: 请问

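[('打招呼', '0.998965')]

Training finishes almost instantly (every step in the log above takes well under a millisecond), leaving my old Intel Mac in the dust. "Sweet" hardly covers it!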
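The 14-element vector printed near the end of the log is the bag-of-words encoding of "你好". The pure-Python sketch below reproduces just that step so the idea is visible without TensorFlow or NLTK. It rests on an assumption: each pattern is treated as a single token and stemming is skipped, which is consistent with the log but is not how the script itself computes the vector. The function name `bag_of_words` is made up for this example.

```python
# Patterns copied from the intents dictionary in the script above.
patterns = {
    "打招呼": ["你好", "您好", "请问", "有人吗", "师傅", "不好意思", "美女", "帅哥", "靓妹"],
    "告别": ["再见", "拜拜", "88", "回见", "回头见"],
}

# Assumption: one token per pattern, no stemming; the vocabulary is the sorted set of tokens.
vocabulary = sorted({p for group in patterns.values() for p in group})


def bag_of_words(tokens, vocabulary):
    """Return a 0/1 list with a 1 at every vocabulary position present in tokens."""
    present = set(tokens)
    return [1 if word in present else 0 for word in vocabulary]


vector = bag_of_words(["你好"], vocabulary)
print(len(vocabulary), vector)
```

Because Python sorts strings by code point, "你好" ends up third in the vocabulary, so the single 1 sits at index 2, matching the vector in the log. The network then maps this vector to a probability per intent.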

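Next, let's install the other big-name deep learning framework, PyTorch!

The arm64 build currently supports only Python 3.9, so we create a separate virtual environment for it:

sudo conda create -n pytorch numpy matplotlib pandas python=3.9

The base libraries are installed up front here because, in my experience, if they are not installed when the environment is created, they cannot be installed with pip afterwards. Once the environment has been created, activate it:

(pytorch) ➜ conda activate pytorch

(pytorch) ➜

Then download the arm64 PyTorch wheel: https://github.com/wizyoung/AppleSiliconSelfBuilds/blob/main/builds/torch-1.8.0a0-cp39-cp39-macosx_11_0_arm64.whl

After the download finishes, install it:

sudo pip install torch-1.8.0a0-cp39-cp39-macosx_11_0_arm64.whl

Let's see how PyTorch performs on the M1. Write test_torch.py: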

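from tqdm import tqdm
import torch


# compile the function with TorchScript; each call allocates two large tensors and adds them
@torch.jit.script
def foo():
    x = torch.ones((1024 * 12, 1024 * 12), dtype=torch.float32)
    y = torch.ones((1024 * 12, 1024 * 12), dtype=torch.float32)
    z = x + y
    return z


if __name__ == '__main__':
    z0 = None
    # effectively an endless loop: watch the it/s figure tqdm reports, then stop with Ctrl+C
    for _ in tqdm(range(10000000000)):
        zz = foo()
        if z0 is None:
            z0 = zz
        else:
            z0 += zz

The matrix addition runs at about 45 it/s. For a PyTorch build adapted to the M1 in such a short time, that is already very impressive.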

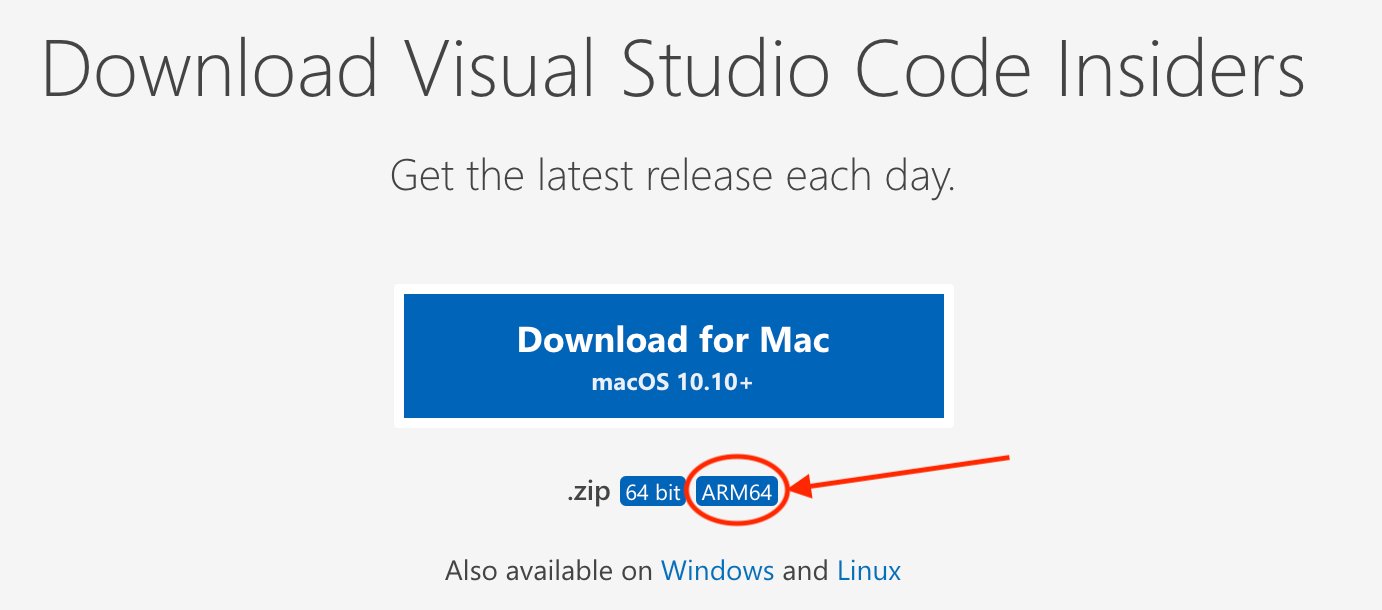
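To put the 45 it/s figure in perspective, the arithmetic below works out how much data each call to foo() touches. It is only back-of-the-envelope arithmetic that takes the reported 45 it/s at face value; it is not a new measurement, and the helper name `tensor_stats` is made up for this example.

```python
def tensor_stats(rows, cols, bytes_per_element=4, iterations_per_second=45):
    """Size of one float32 tensor and the element additions implied per second."""
    elements = rows * cols
    return {
        "elements": elements,
        "mib_per_tensor": elements * bytes_per_element / 2**20,
        "additions_per_second": elements * iterations_per_second,
    }


# Same shape as in test_torch.py: (1024 * 12) x (1024 * 12) float32 values.
stats = tensor_stats(1024 * 12, 1024 * 12)
for key, value in stats.items():
    print(f"{key}: {value:,}")
```

Each tensor holds 150,994,944 elements, or 576 MiB as float32, so 45 iterations per second corresponds to roughly 6.8 billion element additions per second.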

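Finally, is there an editor built for arm64? There is, and VS Code is well worth having. Download it from https://code.visualstudio.com/insiders/#osx and be sure to pick the arm64 build:

Unzip it and run it directly. Install the Python and Code Runner extensions from the extension marketplace, and you are ready to start writing Python on the M1.

Conclusion: an M1 Mac and Python 3 are a match made in heaven. Once the veil between the M1 and developers is lifted, what awaits is a silky-smooth development experience. What are you waiting for? Hesitation only leads to defeat; it is time to set your soul alight and hand over your wallet.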